Vercel Breach Shows How Unsanctioned AI Tools Open Doors to Customer Data

How a compromised third‑party AI tool led to a Vercel data exposure, what defenders should do, and what to watch next.

TL;DR: A Vercel employee’s unsanctioned AI tool gave attackers a foothold, exposing non‑sensitive environment variables for a limited customer subset. The incident highlights the growing risk of shadow AI and the need for tighter third‑party app governance.

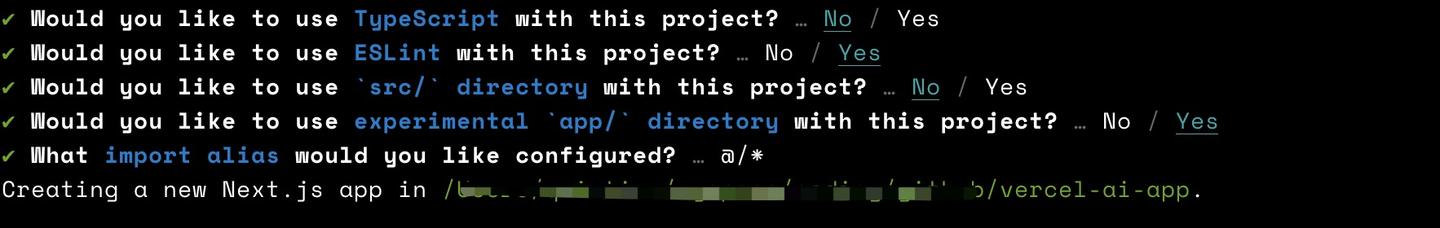

Context: Vercel, a U.S. cloud platform for frontend frameworks, detected unusual activity in April 2026 linked to a third‑party AI service. The service was a consumer version of Context.ai that an employee had connected to their corporate Google Workspace account without IT approval. Attackers compromised that connection, stole OAuth tokens, and moved laterally into Vercel’s internal network.

Key Facts: The breach began when attackers used OAuth tokens stolen through the compromised AI tool to abuse valid cloud credentials (MITRE ATT&CK T1078.004, Valid Accounts: Cloud Accounts) and reach internal repositories and customer‑related data. Vercel’s security team spotted anomalous API calls on April 12, 2026, and contained the intrusion within 48 hours. The investigation showed that a limited subset of customers, fewer than 5% of the total base, had non‑sensitive environment variables exposed; no passwords or payment data were leaked. The attackers used the third‑party tool as a supply‑chain vector (T1195.002) to bypass perimeter defenses, illustrating how shadow AI expands the attack surface.
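
The anomalous API calls described above are the kind of signal that a per‑token baseline can surface. The minimal Python sketch below is not Vercel’s detection logic. It assumes hypothetical `ApiEvent` records that carry a token identifier, a source IP, and an endpoint, and it flags any call made by an unknown token or from a source IP that the token has not used before.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiEvent:
    # Hypothetical log record; real audit logs use different field names.
    token_id: str
    source_ip: str
    endpoint: str


def build_baseline(events):
    """Map each token ID to the set of source IPs it was seen using."""
    baseline = defaultdict(set)
    for event in events:
        baseline[event.token_id].add(event.source_ip)
    return baseline


def flag_anomalies(baseline, events):
    """Return events whose token is unknown or whose source IP is new for that token."""
    flagged = []
    for event in events:
        # dict.get does not trigger defaultdict's factory, so unknown tokens yield None.
        known_ips = baseline.get(event.token_id)
        if known_ips is None or event.source_ip not in known_ips:
            flagged.append(event)
    return flagged
```

IP novelty on its own is noisy. A production detection would typically combine it with other signals, such as ASN, geolocation, user agent, and sudden changes in call volume or in the endpoints a token touches.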

What It Means: Many firms track approved vendors but ignore the ad‑hoc web of apps that employees connect on their own, creating a blind spot that attackers exploit. Vercel’s case shows that a single unsanctioned AI integration can lead to data exposure, triggering customer‑notification delays and reputational harm. Defenders should enforce centralized approval for AI tools, monitor OAuth grants with a cloud access security broker (CASB), and require MFA for all cloud accounts. Recommended actions include: (1) inventory all third‑party app connections quarterly; (2) block consumer‑grade AI apps unless they have been vetted; (3) enable detection for abnormal token usage (e.g., alerts mapped to MITRE ATT&CK T1078.004); (4) rotate compromised credentials and review OAuth scopes for least privilege. Organizations should also update incident‑response playbooks to cover shadow‑AI scenarios and consider adopting CISA’s Secure Cloud Business Applications (SCuBA) guidance for OAuth risk management.
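
A simple audit script can serve as a starting point for the quarterly inventory in action (1) and the scope review in action (4). The Python sketch below assumes a hypothetical `OAuthGrant` record exported from the identity provider, a hand‑maintained allowlist of vetted client IDs (`APPROVED_CLIENT_IDS`), and a crude substring heuristic for broad scopes (`HIGH_RISK_SCOPE_MARKERS`). All of these names and values are illustrative placeholders, not part of any vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OAuthGrant:
    # Hypothetical export shape; adapt to whatever your admin console produces.
    user: str
    app_name: str
    client_id: str
    scopes: tuple


# Hypothetical allowlist of client IDs that IT has vetted.
APPROVED_CLIENT_IDS = {"approved-crm-client", "approved-ci-client"}

# Assumed markers for broad data-access scopes; tune per organization.
HIGH_RISK_SCOPE_MARKERS = ("drive", "gmail", "admin")


def audit_grants(grants):
    """Return (grant, reasons) pairs for every grant that needs review."""
    findings = []
    for grant in grants:
        reasons = []
        if grant.client_id not in APPROVED_CLIENT_IDS:
            reasons.append("unapproved app")
        # Flag broad scopes even on approved apps, to support least-privilege review.
        risky = [s for s in grant.scopes
                 if any(marker in s for marker in HIGH_RISK_SCOPE_MARKERS)]
        if risky:
            reasons.append("broad scopes: " + ", ".join(risky))
        if reasons:
            findings.append((grant, reasons))
    return findings


if __name__ == "__main__":
    sample = [
        OAuthGrant("alice@example.com", "AI Notes (consumer)", "unknown-ai-client",
                   ("https://www.googleapis.com/auth/drive.readonly",)),
        OAuthGrant("bob@example.com", "CI", "approved-ci-client", ("openid", "email")),
    ]
    for grant, reasons in audit_grants(sample):
        print(grant.user, grant.app_name, "->", "; ".join(reasons))
```

The sketch reports broad scopes on approved apps as well as unapproved ones. An approved integration with overly wide access is the least‑privilege gap that action (4) targets.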

What to watch next: Expect more guidance from CISA and NIST on governing generative AI use, and watch for emerging regulations that may require formal shadow‑IT disclosures, including potential impacts from the EU AI Act on enterprise AI governance.

