Quantum Computing Researchers Show How to Feed AI Data Bit‑by‑Bit, Unlocking Massive Memory Advantage

New research details a method to feed quantum computers data bit by bit, overcoming memory limitations. This approach offers a massive memory advantage for AI and large datasets.

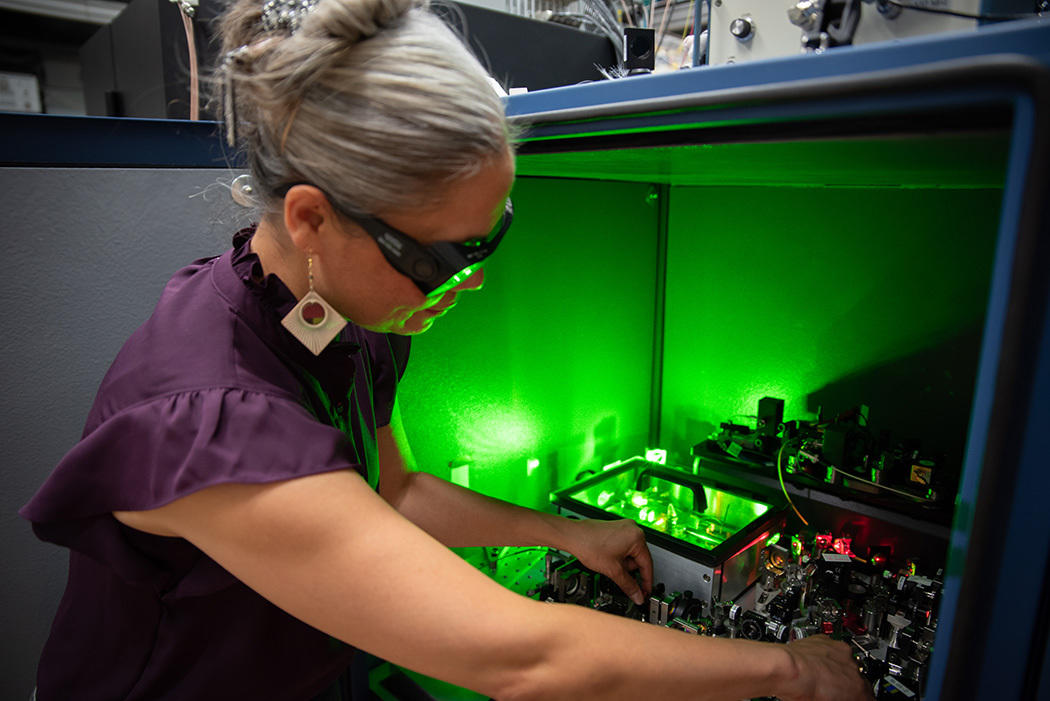

A researcher wearing safety glasses reaches into a box of circuitry and other equipment, which emits a green glow.

**TL;DR** Researchers have developed a method to feed quantum computers data bit by bit, overcoming previous memory hurdles. This approach unlocks a significant memory advantage, paving the way for quantum computers to process massive artificial intelligence datasets.

Machine learning, a core component of artificial intelligence, underpins applications across science, technology, and daily life. As datasets grow larger, the demand for processing power intensifies. Quantum computing offers a potential solution, promising to handle calculations beyond the reach of conventional machines. However, a major challenge has been efficiently inputting the vast amounts of non-quantum data needed for AI algorithms into a quantum system.

Historically, researchers believed that all data needed to be stored in impossibly large memory devices before quantum processing could begin. A team led by Hsin-Yuan Huang at Oratomic now proposes a different strategy. Their work shows that feeding data to a quantum computer one bit at a time is sufficient, so the full dataset never has to be held in memory at once, much like streaming a movie instead of downloading it in full.

This method provides a significant memory advantage. A quantum computer using approximately 300 logical qubits (error-corrected building blocks for quantum computation) could surpass the processing power of a classical computer built using every atom in the observable universe. The quantum machine is inherently powerful, but it relies on efficient data input to leverage its capabilities.
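The scale behind the 300-qubit comparison can be checked with a few lines of arithmetic. The sketch below is only an illustration, not the researchers' calculation: it assumes the common order-of-magnitude estimate of about 10^80 atoms in the observable universe, and it counts the 2^n numbers a classical machine would need to write down the full state of n qubits. The names `ATOMS_IN_OBSERVABLE_UNIVERSE` and `state_space_size` are hypothetical and chosen for this example.

```python
import math

# Assumption: roughly 10^80 atoms in the observable universe
# (a widely quoted order-of-magnitude estimate, not a figure from the article).
ATOMS_IN_OBSERVABLE_UNIVERSE = 10**80


def state_space_size(num_qubits: int) -> int:
    """Count of amplitudes needed to describe num_qubits qubits classically (2^n)."""
    return 2**num_qubits


amplitudes = state_space_size(300)

# Python integers are arbitrary precision, so 2**300 is exact here.
print(f"2^300 is about 10^{math.log10(amplitudes):.1f}")
print("Exceeds atom estimate:", amplitudes > ATOMS_IN_OBSERVABLE_UNIVERSE)
```

Under that assumption, 2^300 comes out near 10^90, about ten orders of magnitude above the atom estimate, which is the kind of gap the comparison relies on. Calling `state_space_size` with other qubit counts shows how quickly the figure grows.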

This result suggests quantum computing can be applied effectively whenever massive datasets are available, addressing a key barrier to quantum AI. Even a smaller machine with 60 logical qubits, potentially achievable this decade, could offer a notable advantage for specific large-dataset AI tasks. Questions remain regarding real-world application and potential “dequantisation” (the discovery of classical algorithms that match a quantum algorithm's performance). Even so, the new method could benefit fields like large-scale scientific experiments, where vast amounts of data are often discarded due to memory constraints. Researchers are now working to expand the set of compatible algorithms and increase quantum computer processing speeds.
